Remember when you had to shout commands at your smart speaker just to get it to turn on the lights? That era is officially over. By mid-2026, Voice AI has shifted from clunky keyword matching to genuine conversation. But the real story isn't just that these systems sound more human; it's where they live. The biggest shift in the industry right now is the move toward on-device models, which process your voice locally rather than sending it to a cloud server. This change addresses two major problems at once: privacy concerns and latency.

The End of the 'Cloud Dependency' Era

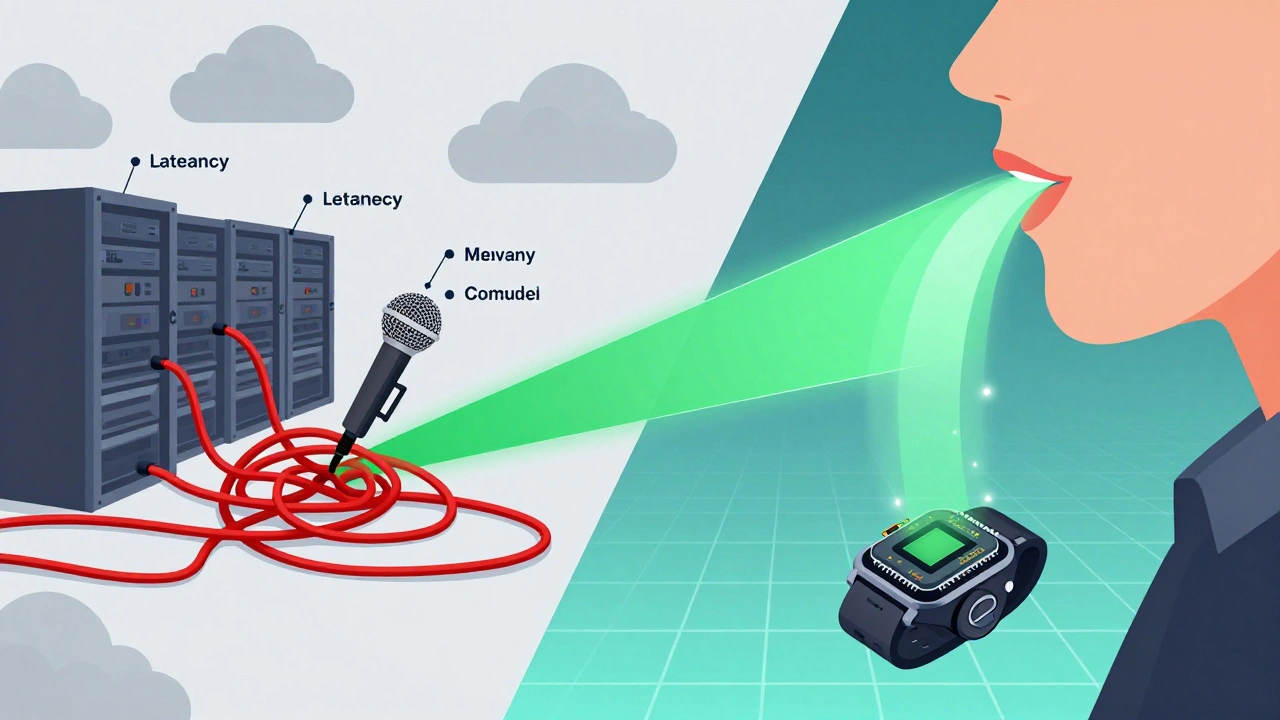

For years, almost every virtual assistant relied on heavy cloud infrastructure. You spoke, your phone recorded the audio and sent it over Wi-Fi or a cellular link to a data center, a massive neural network processed it there, and the answer traveled back the same way. This worked fine for simple tasks like checking the weather. It fell apart when you needed speed or security. If your internet connection dropped, your assistant went silent. More importantly, every word you said traveled across the internet, creating potential vulnerabilities.

Enter Edge AI. This approach moves the intelligence directly onto the hardware you hold in your hand or wear on your wrist. Modern smartphones, laptops, and a growing number of wearables now come with dedicated Neural Processing Units (NPUs). These chips are designed specifically to handle machine learning tasks efficiently. In 2025, major chipmakers like Apple, Qualcomm, and MediaTek released processors capable of running compact large language models (LLMs) entirely offline. This means your device can understand context, nuance, and complex requests without ever connecting to the internet.

This shift changes how we think about digital assistants. They become personal rather than generic. Because the model lives on your device, it can learn your specific speech patterns, vocabulary, and habits without uploading that sensitive data to a third party. It’s not just faster; it’s safer.

Natural Language Understanding Goes Beyond Keywords

The second major advance is in how these systems understand us. Old-school voice recognition was rigid. You had to say "Okay Google" or "Hey Siri" followed by a clear command. If you mumbled, interrupted yourself, or used slang, the system often failed. Today’s Natural Language Processing (NLP) engines are built on transformer architectures that grasp intent, not just syntax.

Think about how you talk to a friend. You don’t use perfect grammar. You pause, you backtrack, you use filler words like "um" or "you know." Modern Voice AI ignores the noise and focuses on meaning. For example, if you say, "I’m heading to the airport, remind me to grab my passport," an older system might struggle because it doesn’t know what "heading to the airport" implies about time or location. A modern on-device model understands the context. It knows you’re leaving soon, so it sets a reminder for ten minutes from now, tied to your calendar event.

This level of understanding requires significant computational power, which brings us back to the hardware. Running these sophisticated NLP models locally allows for real-time interaction. There’s no lag while the system "thinks." The response feels immediate because the processing happens milliseconds after you stop speaking.

Privacy as a Core Feature, Not a Setting

Let’s be honest: most people were uncomfortable with their conversations being stored on corporate servers. Even with encryption, the idea of a central database containing billions of voice recordings felt invasive. Regulations like GDPR in Europe and CCPA in California forced companies to improve transparency, but they didn’t solve the root issue. Data still left the device.

On-device processing flips this script. When your voice data never leaves your phone, there is nothing to steal in a cloud breach. This is particularly critical for industries handling sensitive information. Doctors using voice-to-text for patient notes, lawyers dictating case strategies, or journalists recording interviews all benefit from local processing. The data stays on the device, encrypted with keys only the user possesses.

This also builds trust. Users are more likely to engage deeply with an assistant they know isn’t listening in for ad targeting purposes. As a result, we’re seeing a rise in "private mode" features becoming the default rather than an opt-in setting. Companies are marketing privacy not as a compliance checkbox, but as a premium feature.

| Feature | Cloud-Based Models (Legacy) | On-Device Models (2026 Standard) |
|---|---|---|
| Latency | High (depends on network speed) | Low (instant local processing) |
| Privacy | Data sent to external servers | Data stays on device |
| Connectivity | Requires active internet | Works offline |
| Personalization | Generic, based on aggregate data | Deeply personalized to user habits |
| Cost Structure | Ongoing subscription/cloud fees | One-time hardware investment |

The Hardware Revolution Enabling Local AI

You can’t run advanced AI on old hardware. The jump to on-device models required a revolution in semiconductor design. We’re no longer just talking about faster CPUs. We’re talking about specialized chips optimized for matrix multiplication, the math behind neural networks.

Qualcomm’s Snapdragon 8 Gen 3 and Apple’s A17 Pro chips include NPUs that deliver tens of trillions of operations per second (TOPS). Raw throughput is only half the story, though: efficiency is crucial because battery life matters. Early attempts at on-device AI drained batteries quickly. Newer designs combine hardware techniques such as dynamic voltage scaling with model-side techniques such as sparse attention to reduce power consumption. Your phone can listen for wake words all day without killing your battery because a low-power core handles the detection, waking up the main NPU only when necessary.
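The two-stage listening pattern described above can be sketched in a few lines of Python. This is only an illustration, not any vendor's implementation: the class name `TwoStageListener`, the `threshold` value, and the amplitude-based scoring are all hypothetical stand-ins for a real wake-word detector and a real NPU call.

```python
from dataclasses import dataclass


@dataclass
class TwoStageListener:
    threshold: float = 0.8  # hypothetical confidence cutoff for the cheap stage
    npu_wakeups: int = 0    # counts how often the expensive stage runs

    def cheap_wake_score(self, frame):
        # Stand-in for a tiny always-on detector: mean absolute amplitude.
        return sum(abs(sample) for sample in frame) / len(frame)

    def run_full_model(self, frame):
        # Stand-in for the expensive NPU inference.
        self.npu_wakeups += 1
        return f"processed {len(frame)} samples"

    def on_frame(self, frame):
        # The expensive model runs only when the cheap stage is confident.
        if self.cheap_wake_score(frame) < self.threshold:
            return None
        return self.run_full_model(frame)


listener = TwoStageListener()
frames = [
    [0.01, -0.02, 0.0],   # background noise
    [0.9, -0.95, 0.85],   # loud frame that trips the cheap detector
    [0.05, 0.03, -0.04],  # background noise again
]
results = [listener.on_frame(frame) for frame in frames]
```

Only the middle frame reaches `run_full_model`, so the expensive stage runs once for three frames of audio. The battery saving in real devices comes from the same asymmetry: the cheap check runs constantly, the costly one rarely.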

This hardware evolution extends beyond phones. Laptops now integrate these same principles. Microsoft’s Copilot+ PCs, announced in May 2024, popularized the concept of always-available, locally run AI assistants. By 2026, this is standard across premium ultrabooks. Even IoT devices like smart thermostats and security cameras are getting smarter locally, reducing bandwidth usage and improving reliability.

Challenges Still Remain

It’s not all smooth sailing. Moving AI to the edge introduces new challenges. First, storage space. Large language models require gigabytes of memory. While flash storage is cheap, fitting multiple complex models on a single device can be tricky. Developers are responding with model compression techniques, such as quantization, which reduces the precision of numbers in the model without significantly hurting accuracy. Storing each weight as an 8-bit integer instead of a 32-bit float, for example, shrinks file sizes by about 75%.
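To make the quantization arithmetic concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The weight values are made up for illustration, and real toolchains typically quantize per channel or per block with calibration data; the function names `quantize_int8` and `dequantize` are hypothetical, not taken from any library.

```python
import struct


def quantize_int8(weights):
    """Map floats to int8 values using one shared symmetric scale factor."""
    max_abs = max(abs(w) for w in weights) or 1.0  # fall back to 1.0 if all zeros
    scale = max_abs / 127.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in quantized]


weights = [0.82, -1.27, 0.031, 0.5, -0.44, 1.0]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Compare storage: 4 bytes per float32 weight vs 1 byte per int8 weight.
fp32_bytes = len(struct.pack(f"{len(weights)}f", *weights))
int8_bytes = len(struct.pack(f"{len(q)}b", *q))
saving = 1 - int8_bytes / fp32_bytes

# Worst-case rounding error introduced by quantization.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Here `saving` works out to 0.75, and each restored weight differs from its original by at most half a quantization step (`scale / 2`). The sketch ignores the few extra bytes needed to store the scale factor itself, which is negligible for a model with millions of weights.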

Second, fragmentation. Unlike the web, where browsers render pages similarly, mobile ecosystems vary wildly. Android devices have different chipsets, RAM configurations, and OS versions. Ensuring a consistent voice AI experience across thousands of device types is a nightmare for developers. Apple’s walled garden makes this easier for iOS users, but Android manufacturers must work harder to optimize performance.

Third, update complexity. With cloud AI, updates happen invisibly on the server. With on-device AI, you need to push new model weights to millions of devices. This requires robust over-the-air (OTA) update mechanisms and careful management of storage resources to avoid filling up users’ phones.

What This Means for Everyday Use

So, what does this actually look like in your daily life? Imagine driving your car. Your cellular signal drops as you enter a tunnel. In the past, your navigation assistant would go dumb. Now, your car’s onboard computer handles rerouting, traffic analysis, and voice commands seamlessly. No interruption.

Or consider working remotely in a coffee shop with spotty Wi-Fi. You dictate an email to your colleague. The on-device model transcribes it, corrects typos, and suggests a tone adjustment, all before you reconnect to the network. When you do connect, the email sends instantly.

We’re also seeing better multilingual support. Cloud models struggled with code-switching (mixing languages in one sentence). On-device models, trained on diverse local datasets, handle this naturally. If you speak English with Spanish phrases, the AI understands the mix without needing to switch contexts manually.

The Future: Collaborative Intelligence

The future isn’t purely on-device or purely cloud-based. It’s hybrid. One technique that makes this possible is called "federated learning." Your device trains a local model on your data. Then, it sends only the anonymized improvements (mathematical adjustments to the model, not raw data) to the central cloud. The cloud aggregates these improvements from millions of devices and sends back a smarter global model. This way, everyone benefits from collective intelligence without sacrificing individual privacy.
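The round trip described above can be sketched as a toy version of federated averaging. Everything here is an assumption for illustration: the two-weight "model", the gradient values, the learning rate, and the function names `local_update` and `federated_average`. Production systems also layer protections such as secure aggregation or added noise on top, which this sketch omits.

```python
def local_update(local_gradient, lr=0.1):
    """Runs on the device: turn a locally computed gradient into a weight delta.

    Only this delta leaves the device; the raw data behind the gradient does not.
    """
    return [-lr * g for g in local_gradient]


def federated_average(global_weights, deltas):
    """Runs on the server: average the deltas and apply them to the global model."""
    count = len(deltas)
    averaged = [
        sum(delta[i] for delta in deltas) / count
        for i in range(len(global_weights))
    ]
    return [w + a for w, a in zip(global_weights, averaged)]


global_weights = [1.0, 2.0]                    # made-up starting model
device_gradients = [[1.0, 0.0], [3.0, -2.0]]   # one made-up gradient per device

deltas = [local_update(g) for g in device_gradients]
new_global = federated_average(global_weights, deltas)
```

With these numbers the server ends up with weights of roughly `[0.8, 2.1]`. The server only ever sees the entries of `deltas`, never the voice data that produced the gradients.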

This collaborative approach will make Voice AI even more intuitive. Over time, your assistant will anticipate needs before you voice them, based on patterns learned securely on your device. The line between tool and partner will blur further, driven by technology that respects both your time and your privacy.

Do on-device voice models work offline?

Yes, that is one of their main advantages. On-device models process voice commands locally using the device's Neural Processing Unit (NPU), so they do not require an internet connection to function. This ensures reliability in areas with poor connectivity, such as airplanes or rural regions.

Is on-device voice AI more secure than cloud-based AI?

Generally, yes. Because your voice data never leaves your device, it is not vulnerable to cloud server breaches or unauthorized access by third parties. The data remains encrypted on your hardware, accessible only by you and the apps you authorize.

Why did voice AI shift to on-device models in 2025/2026?

The shift was driven by advancements in chip technology (NPUs) that made local processing efficient and affordable. Additionally, growing consumer demand for privacy and the need for lower-latency responses pushed manufacturers to adopt edge computing solutions.

Can on-device models understand complex natural language?

Yes, modern on-device models use transformer-based architectures similar to cloud models. They can understand context, slang, interruptions, and multi-step instructions, providing a more natural conversational experience without relying on external servers.

Does running AI on my device drain my battery faster?

Early versions did, but recent NPUs are highly optimized for energy efficiency. Features like wake-word detection run on a low-power core, and the main NPU only activates when necessary. In typical use, the impact on daily battery life should be small.